The Rise of the Agent Engineer

The Prompt Engineer died so Agent Engineers could thrive

Agent Engineer is a job title that doesn't formally exist yet at most companies, but I'd bet good money it will be one of the hottest roles in tech within the next two years. I want to explain why I think this is inevitable, and why the short-lived hype around Prompt Engineers actually makes my case stronger.

First, a word on job titles

Naming things is famously hard. As the line usually attributed to Phil Karlton goes, "There are only two hard things in Computer Science: cache invalidation and naming things." Job titles are no exception. The same role at ten different companies will have ten different names, ten different job specs, and honestly, ten different day-to-day realities for the people doing the work.

So when I say "Agent Engineer," I'm less interested in the exact title and more interested in the shape of the role: the specific, compounding set of skills that are becoming genuinely necessary to build AI agent products that actually work in production. After helping over 100 companies build and deploy AI agents, I'm confident enough in that shape to put a stake in the ground.

Why I was never bullish on Prompt Engineer

When Prompt Engineer started trending as a job title, skepticism was warranted, mine included.

The core problem was always that prompting alone doesn't build a product. It's one input into a much larger system. A great prompt won't save you if your retrieval is broken, your tool calls are unreliable, your latency is unusable, or your cost structure makes the feature unshippable at scale. Prompting is a skill, but it was never a sufficient foundation for a job.

The rise and fall of the Prompt Engineer didn't disprove the need for AI specialization. It actually clarified it. What companies discovered is that they need people who can do a lot more than write instructions for a model. They need people who understand the full stack of what an agent actually is.

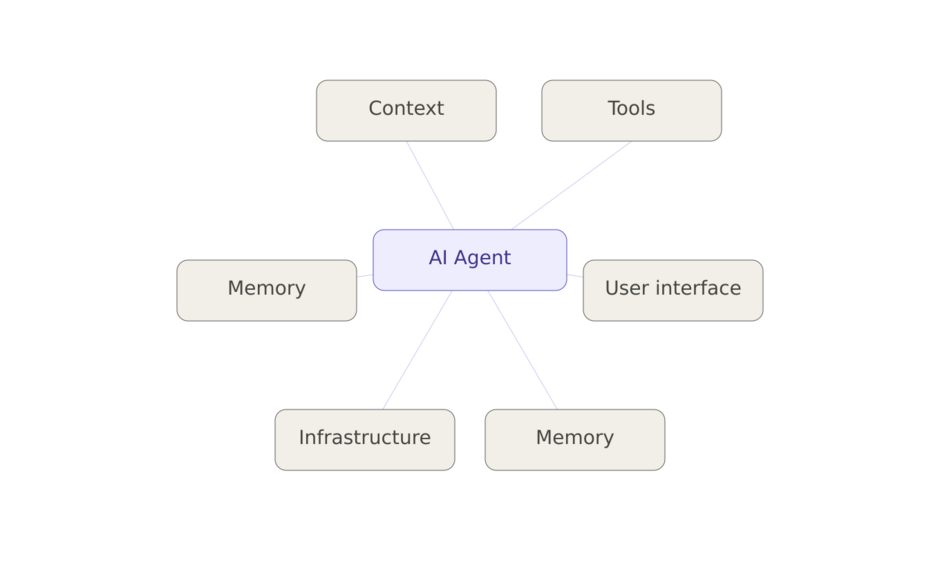

What an agent actually is

Before we talk about who should build agents, it helps to be precise about what we're building. An AI agent isn't just a chatbot with a better system prompt. It's a system with multiple interacting components, and each one has its own failure modes:

- Intelligence: the underlying model(s) making decisions and generating responses

- Tools: external functions the agent can call, such as APIs, databases, code execution, and search

- Context: everything the agent knows at inference time, including the conversation, retrieved documents, system instructions, and user state

- Memory: what persists across sessions, and how it's structured and retrieved

- User Interface: how humans interact with the agent, whether that's chat, voice, buttons, or a side-by-side UI. (And yes, rendering markdown tables and images correctly inside a chat interface is not as trivial as it sounds.)

- Infrastructure: latency, reliability, concurrency, observability. The boring stuff that determines whether your agent works at 10 users or 10,000.

Each of these components is a real engineering problem. Together, they're a specialization.
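To make that list a little more concrete, here's a minimal sketch of how four of those components (intelligence, tools, context, memory) might fit together in code. Everything in it is an assumption for illustration: the `Agent` class, the `CALL <tool> <arg>` convention, and `fake_model` (a stand-in for a real LLM call) are hypothetical names, not any framework's API. User interface and infrastructure are left out on purpose.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Agent:
    """Hypothetical container for the pieces of an agent (illustration only)."""

    model: Callable[[str], str]                      # Intelligence: prompt -> reply
    tools: Dict[str, Callable[[str], str]]           # Tools: name -> callable
    system_prompt: str                               # Context: fixed instructions
    memory: List[str] = field(default_factory=list)  # Memory: kept across turns

    def build_context(self, user_message: str) -> str:
        """Assemble everything the model sees at inference time."""
        remembered = "\n".join(self.memory)
        return f"{self.system_prompt}\n{remembered}\nUser: {user_message}"

    def step(self, user_message: str) -> str:
        reply = self.model(self.build_context(user_message))
        # Assumed convention for this sketch: "CALL <tool> <arg>" requests a tool.
        if reply.startswith("CALL "):
            _, name, arg = reply.split(" ", 2)
            tool = self.tools.get(name)
            # An unknown tool is one of the failure modes the agent must handle.
            result = tool(arg) if tool is not None else f"unknown tool: {name}"
            reply = f"{name} -> {result}"
        self.memory.append(f"User said: {user_message}")
        return reply


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call so the sketch runs offline."""
    last_line = prompt.strip().splitlines()[-1]
    if "weather" in last_line:
        return "CALL weather Paris"
    return "Hello!"


if __name__ == "__main__":
    agent = Agent(
        model=fake_model,
        tools={"weather": lambda city: f"sunny in {city}"},
        system_prompt="You are helpful.",
    )
    print(agent.step("what's the weather?"))
    print(agent.step("hi"))
```

Even in a toy like this, each field is a place where things can break independently: the model can pick the wrong tool, the tool can be missing, the context can grow without bound as memory accumulates.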

The skill set that's emerging

Based on what I've seen across hundreds of companies building in this space (through our product work at Voker, my podcast "Built to Ship", AI meetups, surveys, and product discovery calls), here's what I'd put in the job spec for a strong Agent Engineer:

Orchestration: designing multi-step, multi-model workflows and knowing when to chain, when to parallelize, and when to let a human step in.

Model selection: understanding the tradeoffs between frontier models vs. smaller, faster, cheaper alternatives for specific tasks. Not every step in a workflow needs the biggest model.

Context engineering: this is the evolved form of prompt engineering. It includes how you structure system prompts, how you design RAG pipelines, and how you manage what goes into the context window at every turn.

Token cost optimization: caching strategies, prompt compression, model routing. At scale, a poorly optimized agent can be 10x more expensive than it needs to be.

Tool creation and maintenance: building reliable integrations, handling failures gracefully, and keeping tools working as the systems they connect to change.

Agent harnessing: the art of shaping agent behavior, which means getting consistent outputs, handling edge cases, and building guardrails that don't kill the user experience.

Analytics and evals: the feedback loop that separates teams that ship once and hope for the best from teams that continuously improve. Knowing how to measure what your agent is actually doing in production is non-negotiable.

Staying current: the techniques that were best practice six months ago may already be outdated. Agent Engineers have to treat research as part of the job. At Voker, we recently launched Beyond Benchmarks, an Applied Agent Research series from our Agent Engineering Lab, where we dig into exactly the kind of topics Agent Engineers need to stay sharp on, from the infinite context window promise of RLMs, to hierarchical text classification with LLMs, to whether LLM judges play favorites in evals. More in that series to come.

And underneath all of this, the fundamentals still matter: system design, platform engineering, product thinking. Agent Engineering is Software Engineering, with a specialized layer on top.
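To illustrate the model selection and token cost points from the list above, here's a rough sketch of routing each workflow step to a model tier and estimating what the workflow costs. The tier names, prices, task types, and token counts are all made up for the example; real pricing and real routing rules will look different, and a production router would usually rely on evals rather than a hard-coded set of task types.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class ModelTier:
    name: str
    usd_per_million_input: float
    usd_per_million_output: float


# Hypothetical tiers and prices, invented for this sketch (not real pricing).
SMALL = ModelTier("small-model", 0.5, 1.5)
LARGE = ModelTier("large-model", 5.0, 15.0)

# Assumption: these task types are simple enough for the cheaper tier.
SIMPLE_TASKS = {"classify", "extract"}


def route(task_type: str) -> ModelTier:
    """Pick the cheapest tier assumed to be good enough for the task."""
    return SMALL if task_type in SIMPLE_TASKS else LARGE


def cost_usd(tier: ModelTier, input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one call from its token counts."""
    return (
        input_tokens * tier.usd_per_million_input
        + output_tokens * tier.usd_per_million_output
    ) / 1_000_000


# Each step is (task_type, input_tokens, output_tokens). Made-up numbers.
Step = Tuple[str, int, int]
WORKFLOW: List[Step] = [
    ("classify", 1_000, 100),
    ("plan", 2_000, 500),
]


def workflow_cost(steps: List[Step], always_large: bool = False) -> float:
    """Sum per-step costs, either routed per task or forced onto the large tier."""
    total = 0.0
    for task_type, tokens_in, tokens_out in steps:
        tier = LARGE if always_large else route(task_type)
        total += cost_usd(tier, tokens_in, tokens_out)
    return total


if __name__ == "__main__":
    print(f"all large: ${workflow_cost(WORKFLOW, always_large=True):.5f}")
    print(f"routed:    ${workflow_cost(WORKFLOW):.5f}")
```

With these invented numbers, sending only the classification step to the smaller tier drops the per-run cost from roughly $0.024 to roughly $0.018. The absolute figures mean nothing; the point is that the savings come from a routing decision, not from a better prompt.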

This isn't a side project for your existing engineers

Here's what I keep hearing from the PMs, engineers, and executives I talk to: they're all further behind than the hype suggests. Most companies are still running pilots. They have one or two agent features in some form of limited production, and they're realizing that turning those pilots into real product lines is a much heavier lift than expected.

The teams making real progress aren't just giving their existing engineers an Anthropic API key and wishing them luck. They're investing in dedicated people who have gone deep on this problem space. As agent-powered products mature, the job market around them will mature too, and the Agent Engineer will become as recognizable a role as the DevOps Engineer or the ML Engineer before it.

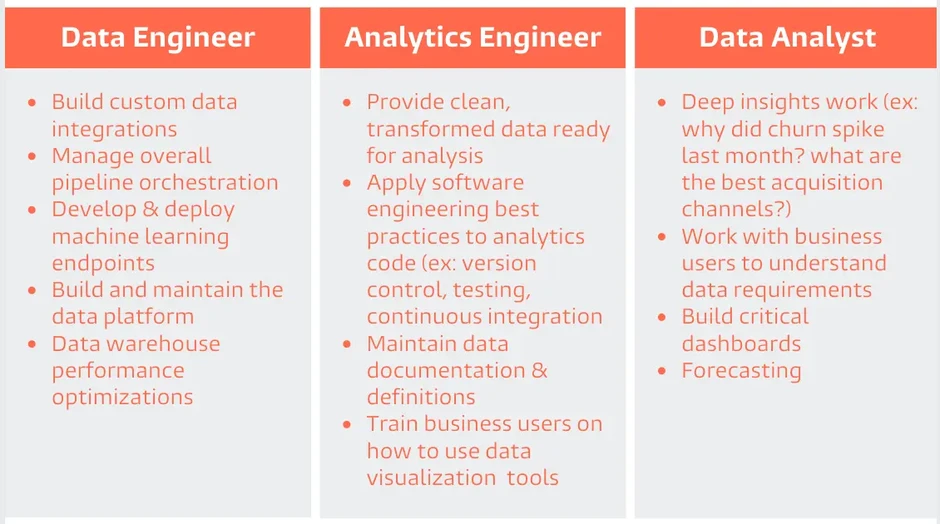

The dbt parallel

If you want a recent analogue, look at what happened with Analytics Engineering. Before dbt, data transformation work fell into a gray zone between data analysts and data engineers. Neither owned it cleanly, and it mostly got done badly. dbt created enough structure and tooling around the work that a distinct role crystallized: the Analytics Engineer.

The conditions that made that happen are showing up again. The problem space is well-defined. The tooling is maturing. The work is too important and too complex to stay a side responsibility. The Agent Engineer is the Analytics Engineer of this cycle.

If you're already convinced and want to start hiring, we've put together an Agent Engineer job description template you can adapt and post today. And if you want the full hiring kit (interview questions and a technical loop template), comment, email, or DM me and I'll send it over.

If there are key skills or responsibilities for Agent Engineers that you think I missed, let me know in the comments.
